When AI Recruiting Goes Wrong: The Eightfold Lawsuit and the Case for Structured People Data

A class action filed this week reveals what happens when AI hiring tools skip data maturity, and why the solution isn’t better algorithms, but better data.

On January 21st, two job seekers filed a proposed class action against Eightfold AI, alleging the company builds “dossiers” on candidates using scraped social media profiles, location data, and internet activity, then ranks them without their knowledge or consent. (Read the full story at HR Dive. The full complaint is available from Outten & Golden.)

The complaint’s opening paragraph sets out the plaintiffs/claimants’ core allegation: “This case is about how Defendant Eightfold AI Inc. uses hidden Artificial Intelligence technology to collect sensitive and often inaccurate information about unsuspecting job applicants and to score them from 0 to 5 for potential employers based on their supposed ‘likelihood of success’ on the job.”

According to the complaint, filed in the Superior Court of California, Eightfold’s software allegedly collected personal information from unverified third-party sources, including social media profiles, location data, internet and device activity, and cookies, then processed this data through a proprietary large language model to rank candidates on “likelihood of success.” The two plaintiffs/claimants, represented by law firm Outten & Golden LLP, brought the lawsuit because they believe they were screened out of positions for which they were qualified, with no visibility into why. (CIO)

The complaint alleges that a number of large enterprise companies use Eightfold AI in their candidate screening process, and that two US state labour departments also offer Eightfold-powered platforms for job seekers. (Outten & Golden.)

The plaintiffs/claimants allege that Eightfold’s AI-generated reports describe personality traits like “team player” and “introvert,” and predict future job titles and career trajectories, all from data the candidates never provided and cannot see or dispute. (Reuters via Yahoo Finance)

While the lawsuit focuses on external recruitment, Eightfold’s platform extends across the full employee lifecycle, including internal mobility, workforce planning, and career development. The company announced a “Digital Twin” product in May 2025 designed to capture employee data by integrating across email, messaging platforms, CRMs, and project management tools. (Eightfold press release via PR Newswire) The questions raised by the lawsuit, around consent, transparency, and individual access, are relevant considerations for any platform that profiles and scores workers or candidates going forward.

This isn’t a story about AI gone rogue. It’s a story about what happens when organisations try to find meaning in meaningless unstructured data.

The wrong use of AI

The Eightfold lawsuit illustrates a pattern we see across AI-enabled talent acquisition: collect as much unstructured data as possible, apply NLP and machine learning, and hope signal emerges from noise.

The complaint describes how, according to the plaintiffs/claimants, “Eightfold’s technology lurks in the background of job applications for thousands of applicants who may not even know Eightfold exists,” allegedly collecting scraped unstructured People data to create a profile about the candidate’s behaviour, attitudes, human capability intelligence, aptitudes and other characteristics that applicants never included in their job application.

But scraped social media profiles, cookies, and internet activity aren’t validated capability data. They’re proxies, often outdated, frequently inaccurate, and inherently gameable. When AI interprets this noise as if it were intelligence, the risk is exactly what the plaintiffs/claimants describe: scores based on information candidates can’t see, verify, or dispute, and qualified people potentially screened out of roles they could have excelled in.

The human cost, as the plaintiffs/claimants put it: “These job applicants have no meaningful opportunity to review or dispute Eightfold’s AI-generated report before it informs a decision about one of the most important aspects of their lives: whether or not they get a job.”

This is the Data Readiness Level (DRL) 3-5 trap. The data exists. It can be processed. But it was never designed to answer the question being asked.

The right use of AI

The alternative isn’t less AI, it’s better data.

Lumenai LABS, the academic branch of Lumenai, launched Data Maturity Matters (DMM) in October 2025 to address this emerging issue. DMM is the product of 12 months of intensive academic research into People Data maturity, the history of analytics maturity models, and the emerging use of AI-Enabled Workforce Intelligence. The research examined how, without mature structured data, People Analytics remains heavily reliant on quantitative and numerical data for its Predictive and Prescriptive ambitions, and how this gap in data maturity is precisely why companies like Eightfold are scraping unstructured data and modelling it with AI to produce scores. DMM maps the direction of travel from unstructured to structured People Data practices. Those practices are intended to move People Analytics capabilities rapidly from Descriptive to Predictive and Prescriptive, across both qualitative and quantitative People Data, and to support ethical, consent-based AI-Enabled Workforce Intelligence.

As an academically driven vendor, incubated at Oxford University Innovation and committed to academic rigour, we believed that releasing these academically informed People Data maturity standards, frameworks, and toolkits as open source was essential. At this moment in the progress of human history, when AI is fundamentally reshaping how organisations understand and develop their people, it is essential that all stakeholders can engage with, validate, and apply an academically rigorous common language framework to their strategy and practices around consent, People Data standards, and maturity. This cannot, we believe, be proprietary. It must be a movement in awareness and education, not a monetised product. (Explore the framework at www.datamaturitymatters.tech. For the research foundations, see Addressing the 85% AI Failure Rate: Why We Have Built an Open-Source Data Maturity Toolkit and Consortium.)

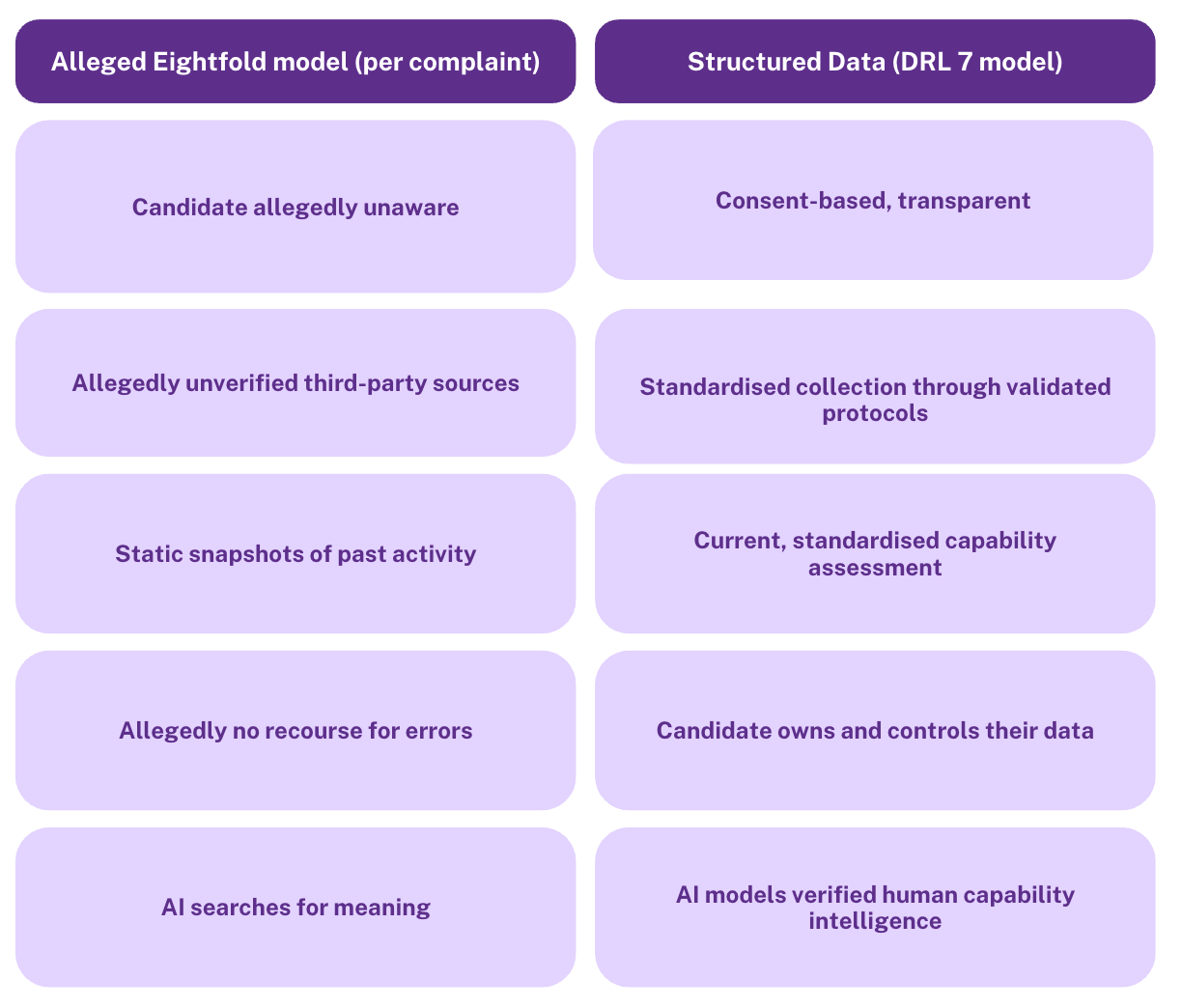

The Data Readiness Level (DRL) framework at the heart of DMM addresses precisely the problems this Eightfold lawsuit exposes. DRL 7 protocols enable structured data collection that produces consent-based, ethical candidate and employee capability data designed for intelligent consumption from first contact. (For the full technical architecture, see our piece on Data Readiness Levels: The Technical Architecture Behind People Data Maturity.)

The difference:

At Lumenai, we follow DMM standards. We are actively building a use case where candidates themselves create their own DRL 7 structured capability data, data they own, that can’t be gamed by AI-generated CVs, and that gives organisations actual capability signal instead of algorithmic guesswork. Our DRL 7 Use Case Initiative launches at Oxford Edge, Oxford University, on 2nd February 2026, where we will be researching and putting under scrutiny our consent-based DRL 7 candidate data collection and portable digital credentials (more info on how to join us/the conversation at the end of the article).

Why this matters now: Industry 5.0 and the regulatory shift

This lawsuit arrives at a pivotal moment. The European Commission’s Industry 5.0 framework explicitly calls for a human-centric approach to technology, one that supports and empowers workers rather than treating them as data points to be mined. The Commission’s vision places “the wellbeing of the industry worker at the centre of the production process” and emphasises that digital technologies should “support and empower, rather than replace, workers.” (European Commission) Consent-based data collection isn’t just ethically preferable, it’s becoming the foundation of sustainable competitive advantage.

The regulatory environment is also catching up. Under the EU AI Act, which entered into force in August 2024, AI systems used for recruitment and employment decisions are classified as high-risk. Annex III explicitly lists “AI systems intended to be used for the recruitment or selection of natural persons, in particular to place targeted job advertisements, to analyse and filter job applications, and to evaluate candidates” as high-risk applications, alongside “AI systems intended to be used to make decisions affecting terms of work-related relationships, the promotion or termination of work-related contractual relationships, to allocate tasks based on individual behaviour or personal traits or characteristics or to monitor and evaluate the performance and behaviour of persons in such relationships.” (EU AI Act, Annex III) This triggers stringent requirements: employers must inform candidates and employees about AI usage, ensure human oversight, maintain transparency about how decisions are made, and give individuals the right to request explanations. As of February 2025, certain practices, including emotion recognition in workplaces and untargeted scraping to build facial recognition databases without consent, are outright prohibited. (EU AI Act Summary)

The direction of travel is clear. Covert profiling, opaque algorithmic scoring, and data collection without meaningful consent are not just ethically questionable, they are increasingly legally untenable.

Human skills as competitive advantage

The Eightfold lawsuit exposes a deeper problem: organisations want to understand human capabilities, but lack the structured data to do so. The response, as this case allegedly illustrates, has been to scrape whatever digital traces are available and hope AI can infer the rest.

The World Economic Forum’s December 2025 report, New Economy Skills: Unlocking the Human Advantage, identifies exactly this gap. Organisations worldwide lack standardised frameworks to assess, develop, and credential human-centric skills. The WEF calls explicitly for “standardised frameworks, scalable assessment tools, and clear pathways for recognition.”

Without such frameworks, the temptation to fill the void with scraped data and algorithmic inference is understandable, even if the results are, as the plaintiffs/claimants allege, inaccurate and opaque. The solution is not to abandon the goal of understanding human capability, but to build the structured data infrastructure that makes it possible to do so ethically and accurately.

This is precisely what Lumenai’s Human Capability Indexing (HCIx) delivers. By transforming qualitative human attributes into consent-based structured People Data at DRL 7, HCIx provides the foundation that Predictive People Analytics and AI-Enabled Workforce Intelligence actually require, without resorting to covert profiling or unverified third-party sources. (For more on how HCIx addresses the WEF’s call, see Answering the WEF Call: How to Build Human-Centric Skills into Structured People Data that Delivers Competitive Advantage. For how we do it, see HCIx Inside: The Foundation Layer for AI-Enabled Workforce Intelligence.)

Transparency as the standard

At Lumenai, we believe we are at the forefront of what transparent, consent-based People data collection should look like, and what vendors across the industry should be doing. This is a revolution in vendor transparency and accountability. We are happy to champion that visibility and to be the first of what we hope will be many vendors working in full transparency about our consent-based solution and how it maps against validated, academically developed protocols that are reviewed and scrutinised by experts.

Our approach is open source, academically grounded through our incubation at Oxford University Innovation, and built on the principle that candidates and employees should consent to the collection of their capability data and share ownership of it. We invite scrutiny. We welcome comparison. Because if AI is going to shape who gets hired, promoted, and developed, the methodologies behind those decisions should be visible, understandable, and accountable. (For more on how we’re addressing the AI screening AI problem, see last week’s piece: AI Screening AI: Why We’re Launching the DRL 7 Use Case Initiative.)

The legal question, and the larger one

The Eightfold lawsuit hinges on whether these AI assessments constitute “consumer reports” under the Fair Credit Reporting Act, which would require disclosure, candidate access, and dispute rights. The CFPB supported this interpretation in 2024; the Trump administration rescinded that guidance in May 2025. (FindLaw)

But the legal question obscures the more fundamental problem: regardless of how this case is decided, the underlying data architecture issues it raises are ones the entire industry must address. You cannot build reliable candidate and workforce intelligence by mining unstructured data that was never designed to answer capability questions.

Join the conversation

We would love people to join this conversation, either in person at our event at Oxford Edge, Oxford University, on 2nd February for the launch of the DRL 7 Use Case Initiative, or online through the debate and work we are building at Data Maturity Matters (www.datamaturitymatters.tech).

Book your seat here: Eventbrite.

More information:

Join the People data maturity revolution: www.datamaturitymatters.tech

HCIx Inside: www.lumenai.tech